Joint Video and Text Parsing for Understanding Events and Answering Queries

Kewei Tu, Meng Meng, Mun Wai Lee, Tae Eun Choe and Song-Chun Zhu

Abstract

We propose a framework for parsing video and text jointly, generating narrative text descriptions and answering user queries. Our framework produces a parse graph that represents the compositional structures of spatial information (objects and scenes), temporal information (actions and events) and causal information (causalities between events and fluents) in the video and text. The knowledge representation of our framework is based on a spatial-temporal-causal And-Or graph (S/T/C-AOG), which jointly models possible hierarchical compositions of objects, scenes and events as well as their interactions and mutual contexts, and specifies the prior probability distribution of the parse graphs. We present a probabilistic generative model for joint parsing that captures the relations between the input video/text, their corresponding parse graphs and the joint parse graph. Based on the probabilistic model, we propose a joint parsing system consisting of three modules: video parsing, text parsing and joint inference. Video parsing and text parsing produce two parse graphs from the input video and text respectively. The joint inference module produces a joint parse graph by performing matching, deduction and revision on the video and text parse graphs. The proposed framework has the following objectives: first, we aim at deep semantic parsing of video and text that goes beyond the traditional bag-of-words approaches; second, we perform parsing and reasoning across the spatial, temporal and causal dimensions based on the joint S/T/C-AOG representation; third, we show that deep joint parsing facilitates subsequent applications such as generating narrative text descriptions and answering queries in the forms of who, what, when, where and why. We empirically evaluated our system by comparing its output against ground truth and by measuring the accuracy of query answering, and obtained satisfactory results.
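To make the idea of combining a video parse graph and a text parse graph concrete, here is a minimal toy sketch of the matching step only. It is not the system described above: the `Node` and `ParseGraph` classes, the `match_and_merge` function, the exact-label matching rule and all node labels are assumptions made for illustration, and the deduction and revision steps, as well as the probabilistic model, are omitted entirely.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    label: str  # hypothetical label, e.g. "person" or "enter_car"
    kind: str   # "object", "event" or "fluent"


@dataclass
class ParseGraph:
    nodes: set = field(default_factory=set)
    edges: set = field(default_factory=set)  # (parent, relation, child) triples


def match_and_merge(video: ParseGraph, text: ParseGraph):
    """Toy matching: nodes with the same label and kind are treated as one entity.

    Returns the merged graph and a map from each node to its source
    ("video", "text" or "both"). This is an assumed simplification, not the
    probabilistic matching used by the actual system.
    """
    matched = video.nodes & text.nodes
    joint = ParseGraph(video.nodes | text.nodes, video.edges | text.edges)
    provenance = {}
    for node in joint.nodes:
        if node in matched:
            provenance[node] = "both"
        elif node in video.nodes:
            provenance[node] = "video"
        else:
            provenance[node] = "text"
    return joint, provenance


# Hypothetical inputs: the video shows a person entering a car,
# while the text additionally states that the person is tired.
person = Node("person", "object")
car = Node("car", "object")
enter = Node("enter_car", "event")
tired = Node("tired", "fluent")

video_pg = ParseGraph({person, car, enter},
                      {(enter, "agent", person), (enter, "patient", car)})
text_pg = ParseGraph({person, tired}, {(tired, "holder", person)})

joint_pg, provenance = match_and_merge(video_pg, text_pg)
```

In this sketch the shared `person` node is what ties the two sources together; the text-only fluent and the video-only event both end up attached to it in the merged graph, which loosely mirrors how a joint parse graph can carry information that neither input contains on its own.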

Examples

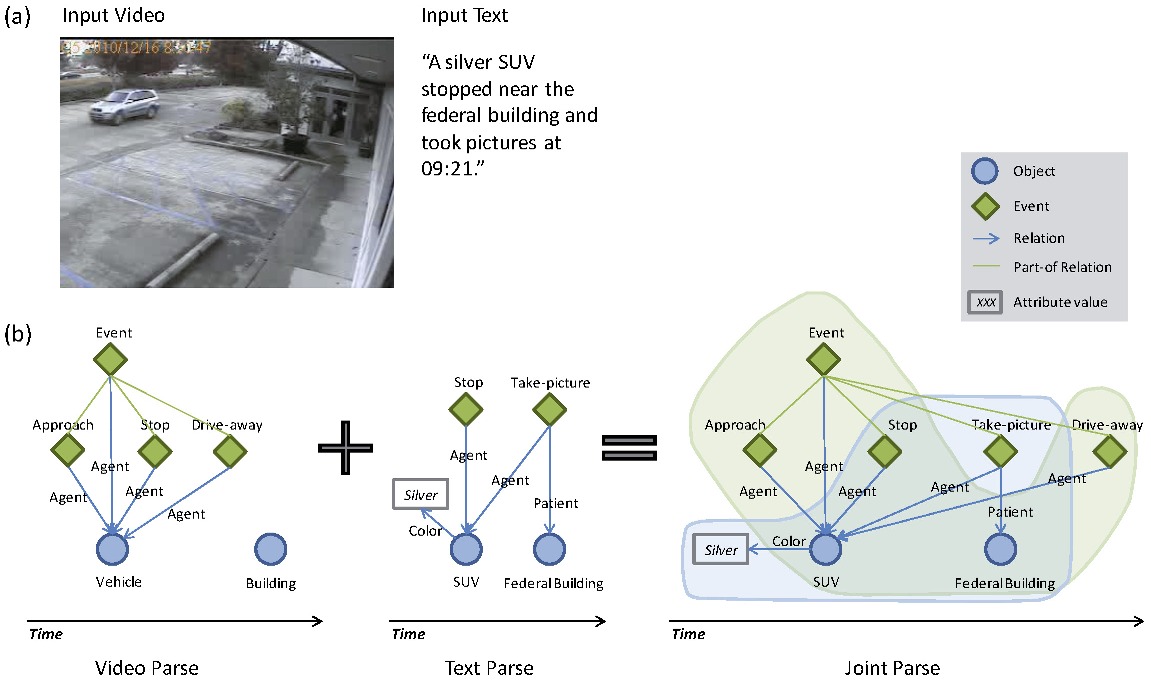

Example 1: (a) An example surveillance video and the text descriptions of a parking lot scene. (b) The video parse graph, text parse graph and joint parse graph. The video and text parses have significant overlap. In the joint parse graph, the green shadow denotes the subgraph from the video and the blue shadow denotes the subgraph from the text.

Example 2: (a) An example surveillance video and the text descriptions of a courtyard scene. (b) The video parse graph, text parse graph and joint parse graph. The video and text parses have no overlap. In the joint parse graph, the green shadow denotes the subgraph from the video and the blue shadow denotes the subgraph from the text.

Publication

Kewei Tu, Meng Meng, Mun Wai Lee, Tae Eun Choe and Song-Chun Zhu, "Joint Video and Text Parsing for Understanding Events and Answering Queries". In IEEE MultiMedia, vol. 21, no. 2, pp. 42-70, 2014. (arXiv)

The work is supported by the DARPA grant FA 8650-11-1-7149, the ONR grant N000141010933 and the NSF CDI grant CNS 1028381.