Unsupervised Structure Learning of Stochastic And-Or Grammars

Kewei Tu, Maria Pavlovskaia and Song-Chun ZhuAbstract

Stochastic And-Or grammars compactly represent both compositionality

and reconfigurability and have been used to model different types of

data such as images and events. We present a unified formalization of

stochastic And-Or grammars that is agnostic to the type of the data

being modeled, and propose an unsupervised approach to learning the

structures as well as the parameters of such grammars. Starting from

a trivial initial grammar, our approach iteratively induces

compositions and reconfigurations in a unified manner and optimizes

the posterior probability of the grammar. In our empirical

evaluation, we applied our approach to learning event grammars and

image grammars and achieved comparable or better performance than

previous approaches.

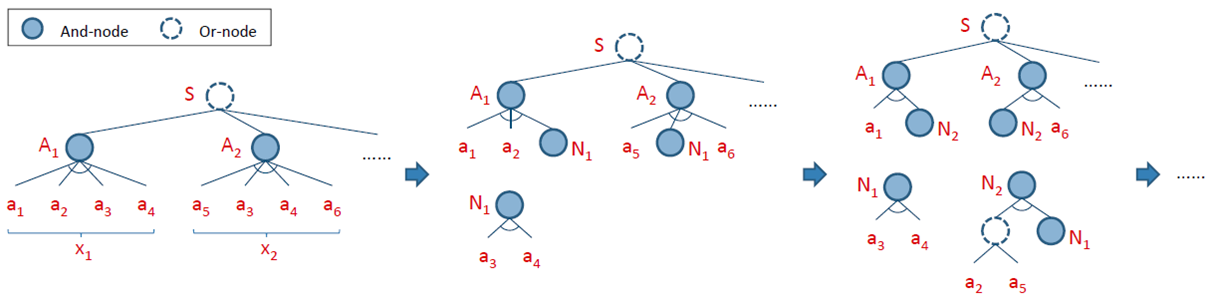

Algorithm Overview

Framework

- Start with the maximum-likelihood grammar (simply the union of all the training samples)

- Repeat:

Add a new grammar fragment and use it to reduce training samples s.t. the posterior is maximally increased

Until no more fragment can be learned

A grammar fragment is a set of grammar rules that are rooted at a new nonterminal node and specify how the new nonterminal node generates one or more configurations of existing nodes.

And-Or Fragment

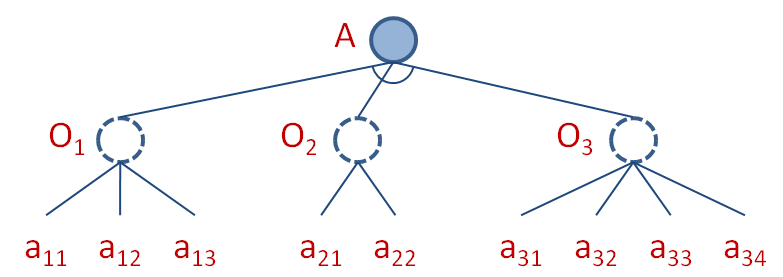

We propose to search for And-Or fragments in the algorithm. An And-Or fragment contains:

- A new And-node as the root

- A set of new Or-nodes under the And-node

- A set of existing nodes under each new Or-node

By learning with And-Or fragments, we induce compositions and reconfigurations in a unified manner that is more efficient and robust than learning with other types of grammar fragments. The posterior gain of adding an And-Or fragment can be efficiently computed based on a set of sufficient statistics.

Publication

Kewei Tu, Maria Pavlovskaia and Song-Chun Zhu, "Unsupervised Structure Learning of Stochastic And-Or Grammars". In Advances in Neural Information Processing Systems 26 (NIPS 2013), Lake Tahoe, Nevada, USA, December 5-10, 2013. (Supplementary material)(Poster)

Datasets

The links to the datasets used in the experiments of our paper:- Human activity data: coming soon...

- Synthetic dataset of animal face sketches

- Real dataset of animal faces

Source Code

Download here: jAOG v0.1

The work is supported by grants from DARPA MSEE project FA 8650-11-1-7149, ONR MURI N00014-10-1-0933, NSF CNS 1028381, and NSF IIS 1018751.